Maybe-beliefs

We’re taught there are 2 kinds of beliefs: true ones and false ones. And our job is to eradicate the false ones and only have true ones.

There’s nothing wrong with that in theory — but in practice, questions like “what is truth?” and “how do we know what’s true?” make this impossible.

As a result, we’re pursuing an impossible goal, using high-effort and futile methods that have little relevance to our daily lives, and then easing our anxiety about it all by pretending we’ve actually achieved that impossible goal.

That makes our belief landscape look like this:

When we believe and disbelieve this way, we feel certain about our chosen option, and certain everyone else is wrong. We put all our eggs in one basket, and feel alienated from our friends and family who disagree. And when we’re not exactly right — which is most of the time — we’re in denial about it until the very last moment.

Maybe-beliefs

Here’s a different approach that’s worked well for me:

For the sake of this illustration, there are 2 kinds of belief: maybe-beliefs, and operative beliefs. Maybe-beliefs are ideas you are willing to allow to be true, without preference, just in case they turn out to be true. Operative beliefs are the beliefs on which you base real-world actions and decisions.

Maybe-beliefs don’t have to affect your life at all. They can exist only in your mind. Nobody needs to know you’re okay with the possibility that Mark Zuckerberg is literally a 5th-dimensional demon lizard. It won’t affect your life unless it becomes the reason you take an action. In the meantime, you will be open to the possibility, so that if evidence starts to pile up, you’ll be calm and ready while everyone else is surprised, disoriented, or in denial.

Being wrong about maybe-beliefs is low-risk, because it doesn’t really affect your life. Only being wrong about the operative beliefs (the ones you “bet on with action”) affects your life. And allowing yourself to entertain all possibilities should make it significantly easier to make good bets when it’s time to act.

This approach means you don’t need to spend a ton of time analytically distinguishing truth from falsehood. Instead:

Have no preference

Let evidence accumulate

See what emerges in the absence of preference

Hedge your bets when action is required
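
If it helps to see those four steps as something concrete, here is a rough sketch in Python. Everything in it is made up for illustration: the `MaybeBelief` class, the simple tally of observations, and the proportional `hedge` rule are assumptions, not a method from this post or from Ideamarket. The point is only that a maybe-belief just collects evidence, and a bet gets sized when action is required.

```python
from dataclasses import dataclass


@dataclass
class MaybeBelief:
    """An idea held without preference: evidence is tallied, not argued with."""
    claim: str
    evidence_for: int = 0
    evidence_against: int = 0

    def observe(self, supports: bool) -> None:
        # Let evidence accumulate without taking a side.
        if supports:
            self.evidence_for += 1
        else:
            self.evidence_against += 1

    def lean(self) -> float:
        # Share of observations in favor; 0.5 when nothing has been seen yet.
        total = self.evidence_for + self.evidence_against
        return 0.5 if total == 0 else self.evidence_for / total


def hedge(belief: MaybeBelief, stake: float) -> dict:
    """Split a stake across both outcomes in proportion to the current lean."""
    p = belief.lean()
    return {"for": stake * p, "against": stake * (1 - p)}


# Hypothetical example: three observations in favor, one against.
belief = MaybeBelief("It will rain on Saturday")
for supports in (True, True, False, True):
    belief.observe(supports)

bets = hedge(belief, stake=100.0)
print(belief.lean())  # 0.75
print(bets)           # {'for': 75.0, 'against': 25.0}
```

A belief only becomes operative at the moment `hedge` is called; until then it costs nothing to keep around.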

From this angle, truth-seeking feels more like “relaxed observing” than “rigorous investigating.”

“Having no preference” is the hard part, and that’s why the emotional weightlifting aspect of truthloving is so important.

Ideamarket announcement

This post pairs well with the new product we’ve just soft-launched for Ideamarket (the startup I founded to solve fake news).

Ideamarket invites you to express your confidence about important issues on a scale of 0-100.

Sounds kind of like the above — only it’s on-chain, permanent, and networked so you can see how the world’s beliefs influence each other.

The goal is to create a public track record of personal opinions, so that we can see who was right when it counted, and figure out who to trust instead of media corporations.

If you’re comfortable using crypto, I’d love to have you try it and share feedback! We’re in iterating mode, so whatever you like or don’t like, let me know.

In any case, here are…

11 things I’m excited about re: Ideamarket

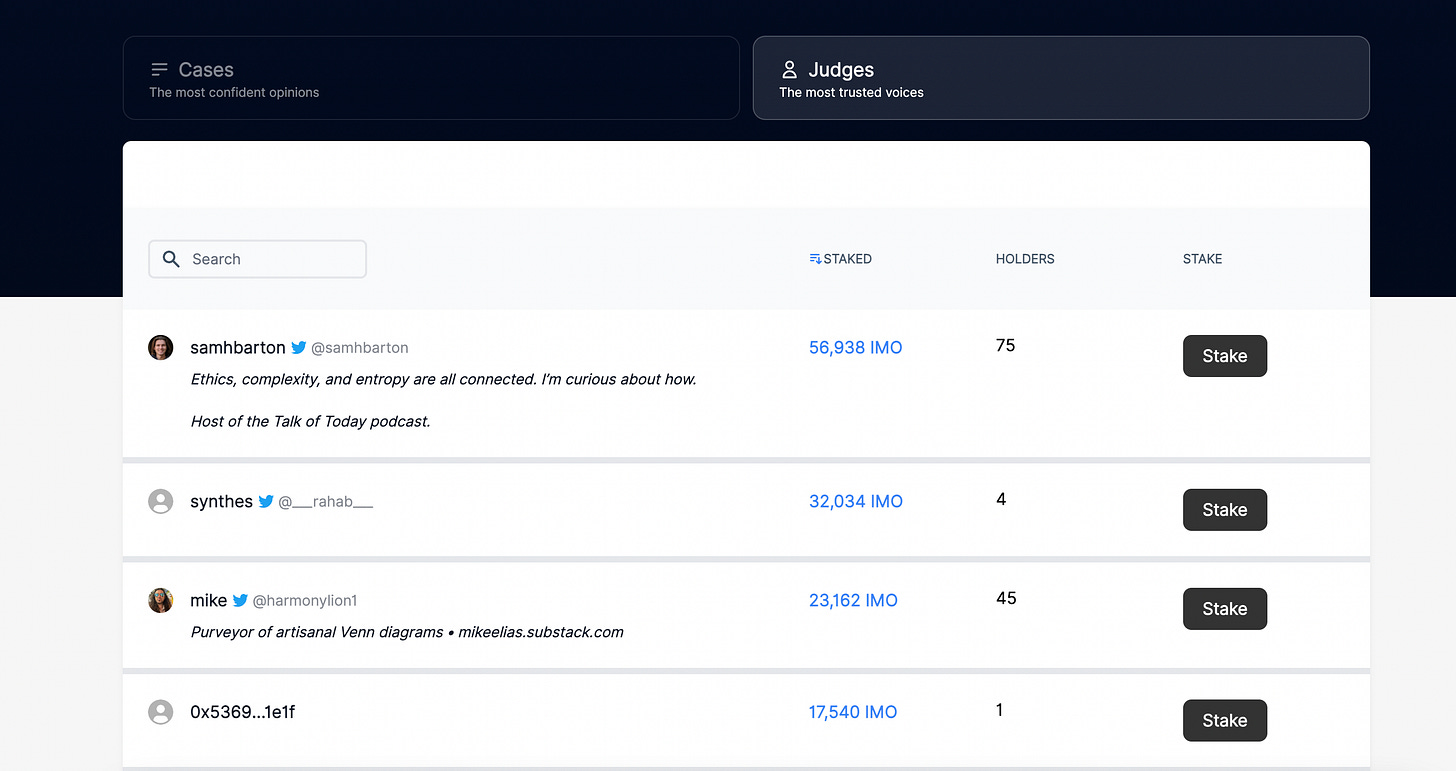

1. Information is judged by users "elected" by staking instead of by media corporations

Think of it as Delegated Proof-of-Stake for public knowledge management.

2. Every post is a node.

When you make a new post, you're contributing to the knowledge available to the whole ecosystem.

3. Every post is an NFT.

If your post gets rated by influential people, it will be like owning a baseball signed by the 1927 New York Yankees, or a bill passed by the US Senate. Especially if it ends up influencing culture in some way.

(Yes, you can sell your posts on OpenSea.)

4. Your “portfolio of beliefs”

Your profile displays all your opinions.

Visitors can sort by Rating to see your most confident opinions, or by Scrutiny to see your takes on the most controversial issues.

Here’s mine: https://ideamarket.io/u/mike

All of the above are live now. Here’s where we’re going:

5. Prediction markets for public opinion.

Make a bet that "Epstein didn't kill himself" will have an IMO rating above 50 on Jan 1, 2024.

Bettors will be incentivized to cite the best evidence, understand both sides, and judge honestly.

Do you think something important is being suppressed? Wager that public opinion will catch up to you, and watch the evidence on both sides pile in.

"Psychic abilities are real" will have an IMO rating above 50 on Jan 1, 2024.

This can be done today.
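
For the curious, here is a minimal sketch of how a bet like the ones above could be resolved. The `OpinionBet` class and its fields are hypothetical, and the rating is simply passed in as a number, since this post doesn't describe how the IMO rating is computed or how Ideamarket would settle bets.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class OpinionBet:
    """A wager that a post's rating will be above a threshold on a given date."""
    post: str
    threshold: int      # the rating the post must exceed
    resolves_on: date   # the date the rating is checked

    def resolve(self, rating_on_date: float, today: date) -> str:
        # Before the resolution date the bet stays open.
        if today < self.resolves_on:
            return "open"
        return "won" if rating_on_date > self.threshold else "lost"


bet = OpinionBet("Psychic abilities are real", threshold=50, resolves_on=date(2024, 1, 1))

# Hypothetical ratings, for illustration only.
early = bet.resolve(rating_on_date=62, today=date(2023, 6, 1))
final = bet.resolve(rating_on_date=62, today=date(2024, 1, 1))
print(early, final)  # open won
```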

6. Venn diagram of all beliefs that 2 (or more) users have in common

"Show all posts rated above 50 by both Mike and Joe"

"Show all posts rated above 50 by these 10 users"

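Under the hood, a query like the two above is basically a set intersection. Here is a small sketch, assuming ratings are available as a plain mapping of user to post to score; the data, the post names, and the `rated_above_by_all` function are invented for illustration and are not Ideamarket's API.

```python
# Hypothetical ratings: user -> {post: rating on a 0-100 scale}.
ratings = {
    "mike": {"post-a": 90, "post-b": 20, "post-c": 75},
    "joe": {"post-a": 60, "post-b": 80, "post-c": 55},
}


def rated_above_by_all(ratings, users, threshold=50):
    """Return the posts that every listed user rated above the threshold."""
    per_user = [
        {post for post, score in ratings[user].items() if score > threshold}
        for user in users
    ]
    # Intersect the per-user sets; with no users there is nothing in common.
    return set.intersection(*per_user) if per_user else set()


shared = rated_above_by_all(ratings, ["mike", "joe"])
print(sorted(shared))  # ['post-a', 'post-c']
```

The same function works for 10 users as well as 2, since it just intersects one set per user.
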
7. Community belief analysis

"Show the posts BAYC holders rated most highly"

"Show the posts USDT holders rated most highly"

8. Load-bearing belief analysis

"Show most-cited posts"

"Show most-cited posts for category=health"

9. Epistemic vampire attacks

Identify highly-cited post

Gather a bunch of people to prove it wrong

This puts pressure on users who cited it to cite something else instead, or update their rating (i.e., change their mind in public)
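
The first step, and the "who feels the pressure" part, can be sketched in a few lines. This assumes citation data shaped as a mapping from each user to the set of posts they cite; the users, post names, and function names are hypothetical, and nothing here reflects how Ideamarket actually stores citations.

```python
from collections import Counter

# Hypothetical data: user -> set of posts that user has cited.
cited_by_user = {
    "alice": {"study-a", "study-b"},
    "bob": {"study-a"},
    "carol": {"study-b", "study-c"},
    "dave": {"study-a"},
}


def most_cited(cited_by_user):
    """Return (post, citation count) for the single most-cited post."""
    counts = Counter(post for cited in cited_by_user.values() for post in cited)
    return counts.most_common(1)[0]


def users_citing(cited_by_user, post):
    """Return the users who would need to re-cite or update if the post fell."""
    return sorted(user for user, cited in cited_by_user.items() if post in cited)


target, count = most_cited(cited_by_user)
affected = users_citing(cited_by_user, target)
print(target, count)  # study-a 3
print(affected)       # ['alice', 'bob', 'dave']
```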

10. Belief graph for each user

See each user’s opinions, confidence, and citations, networked together into an argument map

11. Google Maps for human knowledge

The top arguments for all beliefs.

Networked so you can see their dependencies.

Timestamped so you can watch them evolve. Replay the evolution of human opinion like it’s weather radar.

All data is open-source and can be visualized.

If any of that was confusing, check out the brief intro here — and if that doesn’t help, please let me know!

Thank you <3

Ideamarket is the culmination of my life’s work up to this point. Please do check it out and, if you can, give it a spin.

Cheers,

—Mike
